Databricks Pricing Guide (2026): DBU Costs, Tiers & How to Cut Your Bill

There’s no way to sugarcoat it: Databricks pricing is confusing. If you’ve ever opened the Databricks pricing page and thought, “So how much is this actually going to cost me?” — you’re not alone. Between Databricks Units (DBUs), multiple compute types, three pricing tiers, and infrastructure costs that aren’t even billed by Databricks, figuring out your real bill can feel like a machine learning problem in itself.

But don’t worry! That’s exactly why this guide exists.

Whether you’re a data engineer trying to understand why your cluster costs so much, a FinOps practitioner building a cost model, or an engineering manager evaluating whether Databricks is worth it — this guide will walk you through everything you need to know. We’ll cover how the DBU billing model works, break down costs by compute type and cloud provider, walk through real-world pricing examples, and give you actionable tactics to cut your Databricks bill starting today.

How Databricks Pricing Works: The DBU Model Explained

Before we get into specific numbers, you need to understand one concept that underlies all of Databricks pricing: the Databricks Unit, or DBU. Once this clicks, the rest of the pricing structure, and the math behind it, makes a lot more sense.

What Is a DBU (Databricks Unit)?

A DBU is Databricks’ unit of processing capability, consumed per hour of compute usage. Think of it like a token that measures how much compute power your workload is using — and then Databricks multiplies your DBU consumption by the dollar rate for that compute type to calculate your cost.

The formula is simple:

DBU Formula: Cost = DBUs consumed × DBU rate (dollars per DBU), where DBUs consumed = the cluster’s DBUs per hour × hours of runtime

What’s less simple is that every compute type has a different DBU rate, and those rates vary by pricing tier (Standard, Premium, or Enterprise) and by cloud provider (AWS, Azure, or GCP). We’ll get into all of those details shortly.
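To make the formula concrete, here is a minimal Python sketch. The function name and the sample figures (a cluster emitting 5 DBUs per hour, billed at $0.55 per DBU) are illustrative assumptions, not published rates.

```python
def dbu_cost(dbus_per_hour: float, runtime_seconds: float, rate_per_dbu: float) -> float:
    """Return the Databricks charge in dollars for one workload.

    Billing is per second, so runtime is converted to fractional hours.
    """
    dbus_consumed = dbus_per_hour * (runtime_seconds / 3600)
    return dbus_consumed * rate_per_dbu


# Hypothetical workload: a 5 DBU/hour cluster running for 2 hours at $0.55 per DBU.
cost = dbu_cost(dbus_per_hour=5, runtime_seconds=2 * 3600, rate_per_dbu=0.55)
print(f"Databricks charge: ${cost:.2f}")
```

This covers only the Databricks side of the bill; the cloud provider’s charge for the underlying instances is separate.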

How Databricks Bills You: Per-Second Pricing & On-Demand vs. Committed Use

Here’s some good news: Databricks uses per-second billing, so you’re only paying for the exact time your clusters are running — not rounding up to the nearest hour like the old days of cloud computing. There are no upfront costs and no mandatory recurring contracts on the on-demand plan.

That said, you can unlock significant discounts in two ways:

- Pre-purchase commitments. Commit to a certain level of annual DBU usage and you’ll get a lower rate. The larger the commitment, the better the discount — and commitments can be used across multiple clouds.

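- Spot or Preemptible Instances. By running workloads on spare cloud capacity, you can get up to 90% off on-demand instance pricing. Note that this discount applies to your cloud provider’s infrastructure charges, not to the DBU rate. More on this in the optimization section.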

If you want to try Databricks before committing to anything, there’s also a free Community Edition (limited features) and a full 14-day free trial.

Databricks Pricing Tiers: Standard vs. Premium vs. Enterprise

Databricks pricing starts with your tier. This determines which features you have access to and sets the baseline DBU rate you’ll pay, so getting this right matters. If you’re weighing Premium against Standard, the biggest differences are governance, access controls, and auditability, not day-to-day Spark functionality. In other words, you’re not buying a traditional software license; you’re choosing a feature tier that changes what you can do and what you pay per DBU, which is why it pays to understand the tiers before you model costs.

Databricks Standard: What’s Included and Who It’s For

Standard is the entry-level tier and covers the core Databricks functionality: collaborative notebooks, Apache Spark-based compute, Delta Lake, and basic job scheduling. It doesn’t include Unity Catalog, role-based access controls (RBAC), or audit logging.

Standard is a good fit for smaller teams just getting started with Databricks, or for organizations that don’t yet have compliance or governance requirements that need the more advanced security controls.

Databricks Premium: Additional Features & When to Upgrade

Premium is where most serious production deployments land. It adds Unity Catalog (Databricks’ unified governance layer), RBAC, audit logging, table access controls, and additional compliance features. If you’re running Databricks in a regulated industry or you have multiple teams sharing the same workspace, Premium is almost certainly where you need to be.

The DBU rate for Premium is higher than Standard, typically by 20-30% depending on the compute type, but the governance capabilities usually justify the cost for teams at scale.

Databricks Enterprise: Custom Pricing & Enterprise-Grade Controls

Enterprise is for large organizations with sophisticated security and compliance requirements. It adds enhanced security controls, dedicated support, and SLA guarantees. Pricing is negotiated directly with Databricks and is contract-based — you won’t find published rates for Enterprise on the pricing page.

If you’re an enterprise customer, your Databricks rep will work with you on a committed-use contract that almost certainly gets you a better rate than on-demand pricing across the board.

| Feature | Standard | Premium | Enterprise |
| --- | --- | --- | --- |
| Collaborative Notebooks | ✓ | ✓ | ✓ |
| Delta Lake | ✓ | ✓ | ✓ |
| Job Scheduling | ✓ | ✓ | ✓ |
| Unity Catalog | - | ✓ | ✓ |
| RBAC & Table Access Controls | - | ✓ | ✓ |
| Audit Logging | - | ✓ | ✓ |
| Compliance Features | - | Partial | ✓ Full |
| Dedicated Support & SLA | - | - | ✓ |
| Pricing | Published | Published | Custom / Negotiated |

Always refer to the Databricks pricing page for current published rates — they update periodically and the exact DBU rate for your tier and compute type is what ultimately determines your cost.

Databricks DBU Rates by Compute Type (2026)

Here’s where things start to get really important. Not all DBUs cost the same. The DBU rate changes significantly depending on which type of compute you’re using, and the difference between the most expensive and cheapest compute types is often 2-3x. Choosing the right compute type for the right workload is one of the highest-leverage cost decisions you’ll make. Below we break down DBU pricing and the practical cost differences across All-Purpose, Jobs, and SQL workloads.

All-Purpose Compute: Highest DBU Rate, Best for Development

All-Purpose Compute is what runs your interactive notebooks and collaborative development work. It’s the most flexible compute type — great for exploring data, writing code, and building pipelines interactively. It’s also the most expensive, carrying the highest DBU rate of any compute type.

The critical thing to understand: All-Purpose Compute clusters stay running as long as you leave them on. If someone opens a notebook, runs a cell, and then goes to lunch without stopping their cluster, you’re paying All-Purpose Compute rates the entire time. This is the #1 source of preventable Databricks waste. (More on how to fix it in the optimization section.)

Jobs Compute: Lower DBU Rates for Automated Workloads

Jobs Compute is purpose-built for scheduled, automated workloads: your nightly ETL pipelines, data quality checks, model training runs, and so on. The DBU rate for Jobs Compute is significantly lower than All-Purpose, typically one-half to one-third of the All-Purpose rate for equivalent compute resources.

This is the single most important compute-type decision you’ll make. If you’re running production pipelines on All-Purpose Compute clusters, you’re almost certainly paying 2-3x more than you need to. More on that in the cost-reduction section below.

Databricks SQL Warehouses: Classic vs. Serverless DBU Costs

SQL Warehouses are compute resources dedicated to Databricks SQL — running analytical queries against your lakehouse data. They come in two flavors, and the cost profiles are quite different:

- Classic SQL Warehouses. These run on dedicated clusters that you provision and size. You pay DBU charges for the time the warehouse is running (even when it’s idle, unless you configure auto-suspend). They’re predictable in cost and perform consistently.

- Serverless SQL Warehouses. These spin up on demand and shut down when not in use — so you’re never paying for idle time. The DBU rate is higher than classic, but for workloads that are bursty or unpredictable, serverless often wins on total cost because you’re only paying for actual query time.

The right choice depends entirely on your query patterns. Consistent, high-frequency SQL workloads often favor Classic. Bursty, ad-hoc analytics often favor Serverless. We’ll dig into this more in the serverless pricing section below.

Delta Live Tables & Model Serving: What DBUs Do They Consume?

Two other compute types worth knowing about:

- Delta Live Tables (DLT). DLT pipelines have their own DBU rate, which sits between All-Purpose and Jobs Compute. There’s also a DLT Enhanced Autoscaling feature that can significantly improve resource utilization for streaming pipelines.

- Model Serving. If you’re using Databricks for ML model deployment, Model Serving endpoints use a separate DBU-based billing model tied to the compute profile (CPU vs. GPU) and the provisioned concurrency of the endpoint.

| Compute Type | Relative DBU Rate | Best For | Idle Cost Risk |
| --- | --- | --- | --- |
| All-Purpose Compute | Highest ($$$$) | Interactive dev, notebooks | High: stays on until stopped |
| Delta Live Tables | High ($$$) | Streaming / ETL pipelines | Medium |
| Jobs Compute | Low ($$) | Automated, scheduled jobs | Low: terminates after job |
| SQL Warehouse (Classic) | Medium ($$$) | Consistent SQL analytics | Medium: configure auto-suspend |
| SQL Warehouse (Serverless) | Medium-High ($$$) | Bursty, ad-hoc SQL | None: auto-scales to zero |
| Model Serving | Varies by CPU/GPU | ML inference endpoints | Low: provisioned concurrency |

Note: Exact DBU rates change periodically and vary by cloud provider and region. Always check the Databricks pricing page for current figures before building a cost model.

Databricks Pricing by Cloud: AWS vs. Azure vs. GCP

Here’s something a lot of Databricks pricing guides miss entirely: your total cost of running Databricks is not just your DBU bill. Databricks charges you for DBUs. Your cloud provider charges you separately for the underlying compute infrastructure — EC2 instances on AWS, VMs on Azure, or Compute Engine VMs on GCP. Both bills add up to your real total cost.

The split varies by workload, but infrastructure is rarely a rounding error: depending on instance choices and discounts, it commonly accounts for anywhere from a third to as much as 70% of your total Databricks spend. That means the cloud provider you’re on, and how efficiently you’re using their infrastructure, matters a lot.

Databricks on AWS: Pricing, Graviton2 Advantage & EC2 Costs

AWS is the most popular deployment platform for Databricks. DBU rates on AWS are competitive, and Databricks has done significant optimization work for AWS infrastructure. One notable advantage: Databricks reports up to 4x better price/performance on AWS for data lakehouse operations using Graviton2 instances, compared to x86-based instances. When you model Databricks pricing on AWS, remember that your total bill is Databricks DBUs plus EC2 and related AWS infrastructure, and that’s where most surprises come from. In practice, the outcome comes down to instance family choices (like Graviton) and how aggressively you use Spot for fault-tolerant work.

What this means practically: if you’re on AWS and you’re not using Graviton2 instance types for your Databricks clusters, you may be leaving significant performance and cost efficiency on the table. Worth checking your cluster instance type configurations.

Your total AWS Databricks bill = Databricks DBU charges + EC2 instance costs + EBS storage + data transfer. For a typical production deployment, EC2 costs alone can easily match or exceed your DBU charges.

Databricks on Azure: Azure Databricks Pricing & VM Costs

Azure Databricks is deeply integrated into the Azure ecosystem, which means it plays well with Azure Data Factory, Azure DevOps, and Microsoft Entra ID for authentication. For organizations already standardized on Azure, this native integration is a genuine advantage. When you model Azure Databricks pricing, the key is to keep DBU charges separate from Azure VM, storage, and networking costs.

Azure Databricks pricing follows the same DBU structure, but the underlying VM costs are billed separately through your Azure subscription. One lever worth knowing: Azure Reserved VM Instances (1-year or 3-year commitments) can significantly reduce your infrastructure costs if your Databricks compute usage is predictable. The DBU charges stay at your Databricks contract rate while the VM costs drop substantially with a reservation.

Databricks on GCP: DBU Rates & Committed Use Discount Interaction

GCP is the smallest of the three Databricks cloud deployments, but it’s a fully supported and production-ready option. DBU pricing on GCP follows the same structure as AWS and Azure. If you’re evaluating Databricks on GCP, double-check how your Compute Engine commitments apply (or don’t) to Databricks-managed infrastructure.

One gotcha to watch out for: GCP Committed Use Discounts (CUDs) for Compute Engine VMs don’t automatically apply to Databricks-managed infrastructure. Check with your GCP account team on how to structure commitments to ensure your CUDs are actually reducing your Databricks infrastructure costs.

| Factor | AWS | Azure | GCP |
| --- | --- | --- | --- |
| DBU pricing structure | Standard DBU model | Standard DBU model | Standard DBU model |
| Infrastructure billing | EC2 (separate) | Azure VMs (separate) | Compute Engine VMs (separate) |
| Best price/performance option | Graviton2 instances | Compute-optimized VMs | N2/C2 instances |
| Infrastructure discount path | Spot Instances / Reserved Instances | Spot VMs / Reserved VM Instances | Preemptible VMs / CUDs (with caveats) |
| Native ecosystem advantage | Broadest AWS service integration | Deep Microsoft / Entra ID integration | BigQuery / Vertex AI integration |

What Drives Your Databricks Costs? 7 Key Factors

Now that you understand the structure, let’s talk about what actually makes your bill go up or down. There are seven main levers that determine how much you pay for Databricks:

- Cluster size. The number of worker nodes and the driver node configuration directly multiply your DBU consumption. Bigger clusters = more DBUs per hour.

- Cluster type and auto-scaling. Fixed-size clusters waste resources when utilization is low. Auto-scaling clusters adjust worker count dynamically, which can reduce costs significantly for variable workloads — but can also spike unexpectedly if not bounded properly.

- Compute type selection. As covered in the compute type section above, using All-Purpose Compute for workloads that should be on Jobs Compute is one of the most common and expensive mistakes teams make.

- Region. DBU rates and cloud infrastructure costs vary by region. US East (N. Virginia) on AWS is typically among the cheapest regions. Running workloads in pricier regions, which often include parts of Europe and Asia-Pacific, can meaningfully increase your bill.

- Databricks Runtime and Photon. Photon (Databricks’ vectorized query engine) runs at a higher DBU rate but dramatically speeds up SQL and DataFrame operations — often consuming fewer total DBUs for the same query workload. For SQL-heavy teams, Photon usually saves money on net.

- Storage. Databricks itself doesn’t charge for storage — but your cloud provider does. Delta Lake data stored on S3, ADLS, or GCS is billed by your cloud provider at their standard object storage rates. Network egress between your Databricks compute and your storage also adds up.

- Workload duration and scheduling. Long-running, inefficient jobs burn DBUs. A job that takes 4 hours to process a daily batch because nobody has optimized it in 18 months costs 4x what a tuned version finishing in 1 hour on the same cluster would.

Real-World Databricks Cost Example: What a Mid-Size Team Actually Pays

Realistically, how much might a mid-size data engineering team pay for Databricks? Let’s walk through a concrete example with the following assumptions:

- Team setup: 5 data engineers doing interactive development + 3 nightly ETL pipelines running in production

- Tier: Premium (Unity Catalog required for governance)

- Cloud: AWS, US East (N. Virginia)

- Development usage: 5 engineers × 4 hours/day of active All-Purpose cluster usage × 22 working days/month. All-Purpose DBU rate: ~$0.55/DBU. Cluster size: 2 worker nodes consuming 2 DBUs/hour each, plus 1 DBU/hour for the driver (5 DBUs/hour total).

- Production pipelines: 3 Jobs Compute pipelines × 2 hours/night × 30 nights/month. Jobs Compute DBU rate: ~$0.22/DBU. Each job uses a 4-worker cluster: 4 workers at 1 DBU/hour each, plus 1 DBU/hour for the driver (5 DBUs/hour total).

Here’s the cost breakdown:

| Cost Component | DBUs/month | Rate | Monthly Cost |
| --- | --- | --- | --- |
| Dev (All-Purpose), 5 engineers | 5 × 4 hrs × 5 DBU/hr × 22 days = 2,200 DBUs | $0.55 | $1,210/month |
| Production ETL (Jobs Compute), 3 pipelines | 3 × 2 hrs × 5 DBU/hr × 30 nights = 900 DBUs | $0.22 | $198/month |
| Databricks DBU Total | 3,100 DBUs | - | $1,408/month |
| AWS EC2 infrastructure (estimated) | - | - | ~$800–1,200/month |
| TOTAL estimated monthly cost | - | - | ~$2,200–2,600/month |

A couple of things jump out here. First, development (All-Purpose) compute costs just over 6x what the production pipelines do: it consumes about 2.4x the DBUs (2,200 vs. 900) at a rate that is 2.5x higher. Second, AWS infrastructure adds a substantial amount on top of the Databricks bill, roughly 57-85% of the DBU charges in this example. Both of these are optimization targets.

Important note: These are approximate rates for illustration purposes. Actual DBU rates vary by tier, region, and any committed-use discounts you’ve negotiated. Always check the current Databricks pricing page for published rates.
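If you want to adapt this example to your own team, the arithmetic is easy to reproduce in a few lines of Python. This is a sketch that uses the same illustrative rates and cluster sizes as the example above; the variable names are our own, and none of the figures are published prices.

```python
# Illustrative rates from the example above (approximate, not published prices).
ALL_PURPOSE_RATE = 0.55  # dollars per DBU
JOBS_RATE = 0.22         # dollars per DBU

# Development: engineers x hours/day x DBUs/hour x working days per month.
dev_dbus = 5 * 4 * 5 * 22
# Production ETL: pipelines x hours/night x DBUs/hour x nights per month.
etl_dbus = 3 * 2 * 5 * 30

dev_cost = dev_dbus * ALL_PURPOSE_RATE
etl_cost = etl_dbus * JOBS_RATE
dbu_total = dev_cost + etl_cost

# Estimated EC2 range from the example; replace with your own cloud bill.
ec2_low, ec2_high = 800, 1200

print(f"Dev: {dev_dbus} DBUs, ${dev_cost:,.0f}/month")
print(f"ETL: {etl_dbus} DBUs, ${etl_cost:,.0f}/month")
print(f"DBU total: ${dbu_total:,.0f}/month")
print(f"All-in: ${dbu_total + ec2_low:,.0f} to ${dbu_total + ec2_high:,.0f}/month")
```

Swapping in your own headcount, hours, and negotiated rates gives a first-pass estimate before you open the official calculator.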

How to Use the Databricks Cost Calculator (Step-by-Step)

Databricks provides an official pricing calculator at databricks.com/product/pricing. Here’s how to get a useful estimate out of it:

- Step 1: Select your cloud provider (AWS, Azure, or GCP) and region.

- Step 2: Choose your pricing tier (Standard or Premium — Enterprise requires a custom quote).

- Step 3: Select the compute type (All-Purpose, Jobs, SQL, etc.) and input your estimated usage hours and cluster size.

- Step 4: The calculator outputs your estimated monthly DBU cost for that specific workload. Repeat for each distinct workload type and sum them up.

Databricks Calculator Limitations: What It Doesn’t Tell You

The official calculator is useful but has some real blind spots. Here’s what it won’t show you:

- Cloud infrastructure costs. EC2, Azure VMs, and GCP Compute Engine charges are not included. For most teams, these add 50-100% on top of the DBU number the calculator gives you.

- Storage and egress costs. Delta Lake storage on S3/ADLS/GCS and cross-region data transfer costs aren’t factored in.

- Idle cluster waste. The calculator assumes you’re running the hours you input. It has no way to account for the fact that your team’s All-Purpose clusters are probably running longer than they need to.

- Multi-workload blended costs. If you have a dozen different job types, manually inputting each one and summing the results gets tedious fast.

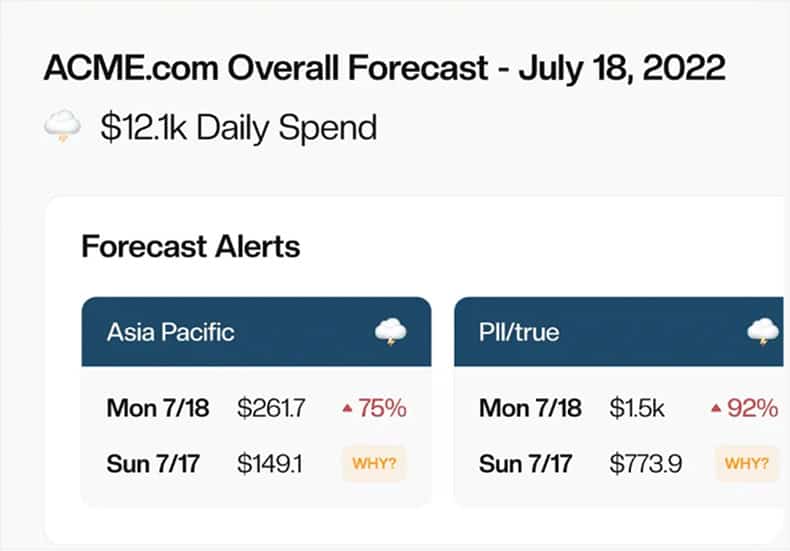

This is where a tool like CloudForecast can help — giving you a real-time, granular view of your actual Databricks spend across workloads, teams, and accounts, so you’re optimizing based on what’s actually happening rather than a static estimate.

Databricks Serverless Pricing: SQL Warehouses & Serverless Jobs

Serverless SQL Warehouses: No Idle Costs, But at What Price?

Classic SQL Warehouses run on clusters you provision — which means you’re paying even when the warehouse is sitting idle (unless you’ve configured auto-suspend, and even then there’s a startup delay when the next query comes in). Serverless SQL Warehouses eliminate that problem entirely: they scale to zero when not in use and spin up in seconds when a query arrives.

The tradeoff is a higher per-DBU rate for Serverless vs. Classic. So which one is cheaper? It depends entirely on your query patterns:

- Consistent, high-frequency queries (e.g., a BI dashboard with dozens of users querying all day): Classic often wins. The warehouse is nearly always active, so idle costs are low and the lower Classic DBU rate saves money.

- Bursty, ad-hoc queries (e.g., data analysts who query intensively for 2 hours in the morning and then leave it alone): Serverless almost always wins. You’re not paying for 6 hours of idle time.

If you’re not sure which pattern fits your team, start with Serverless. You can always switch to Classic once you have enough usage data to model the comparison accurately.
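Once you do have usage data, the comparison can be sketched as a simple model. Everything below is a hypothetical illustration: the warehouse size, both DBU rates, and the VM cost are made-up numbers. The model also assumes the Serverless rate bundles the underlying compute while a Classic warehouse pays for cloud VMs separately, so check how your own contract and cloud bill are structured.

```python
def classic_cost(running_hours: float, dbus_per_hour: float,
                 rate_per_dbu: float, vm_cost_per_hour: float) -> float:
    """Classic bills for every hour the warehouse is up, busy or idle."""
    return running_hours * (dbus_per_hour * rate_per_dbu + vm_cost_per_hour)


def serverless_cost(active_hours: float, dbus_per_hour: float,
                    rate_per_dbu: float) -> float:
    """Serverless bills only for the hours queries are actually running."""
    return active_hours * dbus_per_hour * rate_per_dbu


# Hypothetical inputs; replace every value with your own figures.
DBUS_PER_HOUR = 12
CLASSIC_RATE = 0.22      # dollars per DBU (assumption)
SERVERLESS_RATE = 0.70   # dollars per DBU (assumption)
VM_COST_PER_HOUR = 3.00  # cloud VMs behind the Classic warehouse (assumption)

classic_day = classic_cost(8, DBUS_PER_HOUR, CLASSIC_RATE, VM_COST_PER_HOUR)
bursty_day = serverless_cost(2, DBUS_PER_HOUR, SERVERLESS_RATE)    # 2 busy hours
steady_day = serverless_cost(7.5, DBUS_PER_HOUR, SERVERLESS_RATE)  # busy nearly all day

print(f"Classic, 8h up: ${classic_day:.2f}")
print(f"Serverless, bursty: ${bursty_day:.2f}")
print(f"Serverless, steady: ${steady_day:.2f}")
```

With these made-up numbers, Serverless is cheaper for the bursty day and more expensive for the steady one, which matches the rule of thumb above. The break-even point shifts with your real rates.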

Databricks Serverless Jobs: Instant Compute With Per-Workload Billing

Serverless Jobs is a newer Databricks feature that extends the serverless model to automated job compute — not just SQL. Instead of pre-provisioning a Jobs Compute cluster for each pipeline, Serverless Jobs spins up compute on-demand for each job run and terminates it immediately when the job completes.

The advantages are compelling: no cluster startup lag for short jobs, no idle cluster costs between job runs, and per-second billing with zero provisioning overhead. For teams running many short, frequent jobs (under 15-20 minutes), Serverless Jobs can be a meaningful cost and operational improvement over classic Jobs Compute.

The per-DBU rate for Serverless Jobs is higher than classic Jobs Compute, but the elimination of idle and startup overhead often makes the math work in its favor for the right workload profile.

8 Ways to Reduce Your Databricks Costs Right Now

This is the section that really matters. Understanding the pricing structure is great — but actually cutting your bill is better. Here are eight tactics you can act on immediately, roughly ordered from highest to lowest impact.

1. Switch Production Workloads From All-Purpose to Jobs Compute

This is almost always the biggest single cost reduction available to teams that haven’t already done it. If you’re running scheduled ETL pipelines, data quality checks, or model training jobs on interactive All-Purpose clusters, you’re paying 2-3x more than necessary for the same work.

Jobs Compute clusters are purpose-built for automated workloads, carry a substantially lower DBU rate, and terminate automatically when the job finishes — eliminating idle cost entirely. Migrating a pipeline from All-Purpose to Jobs Compute is usually a trivial configuration change in your Databricks job settings, not a code change.

If you do nothing else from this list, do this.
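As a rough illustration of how small the change is, here is a Python sketch that rewrites a job task definition so it runs on its own job cluster instead of an existing interactive one. The `existing_cluster_id` and `new_cluster` keys follow the Databricks Jobs API task format as we understand it; the helper function, task name, notebook path, and placeholder values are hypothetical.

```python
import copy


def move_task_to_job_cluster(task: dict, new_cluster: dict) -> dict:
    """Return a copy of a job task that runs on a dedicated job cluster."""
    migrated = copy.deepcopy(task)
    # Drop the pointer to the interactive All-Purpose cluster...
    migrated.pop("existing_cluster_id", None)
    # ...and let the job create (and terminate) its own cluster per run.
    migrated["new_cluster"] = new_cluster
    return migrated


# Hypothetical task that currently runs on an All-Purpose cluster.
task = {
    "task_key": "nightly_etl",
    "notebook_task": {"notebook_path": "/Pipelines/nightly_etl"},
    "existing_cluster_id": "<all-purpose-cluster-id>",
}

# Placeholder cluster settings; fill in values valid for your workspace.
job_cluster = {
    "spark_version": "<runtime-version>",
    "node_type_id": "<node-type-id>",
    "num_workers": 4,
}

migrated_task = move_task_to_job_cluster(task, job_cluster)
print(migrated_task)
```

The notebook itself is untouched; only the compute the task points at changes.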

2. Set Auto-Termination on Every Interactive Cluster

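Idle interactive clusters are the #1 preventable source of Databricks waste. Don’t count on defaults to save you: clusters created in the UI typically start with a generous default timeout (120 minutes), and clusters created through the API may have no auto-termination at all unless you set one. Either way, an All-Purpose cluster can run for hours, or indefinitely, if nobody shuts it down.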

Set a sensible auto-termination timeout on every interactive cluster. Thirty minutes is a reasonable default for most development clusters; you can set it shorter for shared clusters where spinning up quickly is less of a concern. This single change can cut your All-Purpose Compute bill by 20-40% in teams where people routinely leave clusters running.
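A quick audit can show which clusters need attention. The sketch below works on a list of cluster records shaped like the output of a cluster listing; `autotermination_minutes` is the field name used in Databricks cluster specifications (to our knowledge, 0 or a missing value means the cluster never auto-terminates). The cluster names, the sample data, and the 60-minute threshold are hypothetical.

```python
def clusters_needing_timeout(clusters: list, max_minutes: int = 60) -> list:
    """Return names of clusters with no timeout or one longer than max_minutes."""
    flagged = []
    for cluster in clusters:
        # Treat a missing value like 0, i.e. auto-termination disabled.
        minutes = cluster.get("autotermination_minutes", 0)
        if minutes == 0 or minutes > max_minutes:
            flagged.append(cluster["cluster_name"])
    return flagged


# Hypothetical records; in practice these would come from your workspace.
sample_clusters = [
    {"cluster_name": "dev-alice", "autotermination_minutes": 30},
    {"cluster_name": "dev-bob", "autotermination_minutes": 0},
    {"cluster_name": "shared-analytics", "autotermination_minutes": 120},
    {"cluster_name": "legacy-etl"},
]

flagged = clusters_needing_timeout(sample_clusters)
print(flagged)
```

Running a check like this on a schedule catches new clusters that were created without a timeout.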

3. Use Spot or Preemptible Instances for Fault-Tolerant Jobs

Who doesn’t love a discount of up to 90%? Spot Instances (AWS), Spot VMs (Azure), and Preemptible VMs (GCP) offer that on the cloud infrastructure portion of your bill, in exchange for compute capacity that can be interrupted. The DBU rate itself doesn’t change. For ETL pipelines and batch jobs that can handle interruptions and retries gracefully, Spot is one of the most aggressive cost levers available.

A practical starting point: run your worker nodes on Spot, but keep the driver node on on-demand. This gives you most of the cost savings while keeping the job orchestration stable. Databricks Jobs automatically handles Spot interruptions with task-level retries, making it well-suited for this pattern.
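On AWS, that pattern can be sketched as a cluster specification built in Python. The `aws_attributes` fields `first_on_demand` and `availability` are taken from the Databricks Clusters API as we understand it; the helper function and the placeholder runtime and node type values are hypothetical, and Azure and GCP use different attribute blocks.

```python
def spot_worker_cluster(num_workers: int, node_type_id: str, spark_version: str) -> dict:
    """Build a cluster spec with an on-demand driver and Spot workers (AWS)."""
    return {
        "spark_version": spark_version,
        "node_type_id": node_type_id,
        "num_workers": num_workers,
        "aws_attributes": {
            # Keep the first node (the driver) on on-demand capacity.
            "first_on_demand": 1,
            # Use Spot for the rest, falling back to on-demand if Spot is unavailable.
            "availability": "SPOT_WITH_FALLBACK",
        },
    }


# Placeholder values; substitute a runtime and instance type from your workspace.
spec = spot_worker_cluster(
    num_workers=4,
    node_type_id="<node-type-id>",
    spark_version="<runtime-version>",
)
print(spec)
```

Falling back to on-demand trades some savings for reliability; a pure Spot setting is cheaper but can leave a job short of workers.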

4. Right-Size Clusters Using Historical Utilization Data

Over-provisioned clusters are everywhere. A team spins up a 10-worker cluster for a job that uses 30% of its capacity, and nobody ever revisits the configuration. Multiply that across dozens of jobs and clusters, and you’re looking at substantial waste.

The fix is to look at your cluster utilization metrics over time (average CPU utilization, memory pressure, and shuffle spill) and resize based on actual usage rather than what someone originally guessed. Tools like Databricks’ built-in compute metrics (Ganglia on older runtimes), system tables, or a cost monitoring platform like CloudForecast can surface this data automatically.

5. Commit to Pre-Purchase DBUs If Your Usage Is Predictable

If you’ve been running Databricks for 3-6 months and your usage is reasonably stable, a pre-purchase commitment can unlock meaningful discounts off on-demand rates. Larger commitments get better rates, and commitments can be used across multiple cloud providers — useful if you’re multi-cloud.

The tradeoff is flexibility: you’re committing to a certain spend level regardless of actual usage. For most teams spending more than $5,000/month on Databricks, the discount math usually justifies making at least a partial commitment for the baseline workloads you know will keep running.
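To reason about that tradeoff, here is a deliberately simplified break-even sketch. It assumes a commitment is a fixed prepaid amount that covers usage at a discounted rate, with any usage beyond it billed at list price. Real contract terms vary, and the 20% discount and dollar amounts are hypothetical.

```python
def annual_cost_with_commitment(list_price_usage: float, commitment: float,
                                discount: float) -> float:
    """Annual spend under a simplified pre-purchase model (an assumption).

    list_price_usage: what the year's usage would cost at on-demand rates.
    commitment: prepaid amount, owed whether or not it is fully used.
    discount: fractional discount applied to usage drawn from the commitment.
    """
    # List-price value of usage that the prepaid amount covers.
    covered_usage = commitment / (1 - discount)
    # Usage beyond the covered amount is billed at list price in this model.
    overage = max(0.0, list_price_usage - covered_usage)
    return commitment + overage


# Hypothetical: a $48,000 commitment at a 20% discount.
for usage in (40_000, 60_000, 72_000):
    committed = annual_cost_with_commitment(usage, 48_000, 0.20)
    print(f"On-demand ${usage:,} vs committed ${committed:,.0f}")
```

In this model the commitment only pays off when your on-demand spend would have exceeded the committed amount, which is why committing to the stable baseline rather than the peak is the safer starting point.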

6. Enable Photon for SQL-Heavy Workloads

Photon is Databricks’ native vectorized query engine, written in C++, that accelerates SQL queries and DataFrame operations dramatically. Yes, it has a higher per-DBU rate than standard runtime. But it also runs the same query 2-5x faster — which means you often consume fewer total DBUs for the same workload.

The net result: for SQL-heavy workloads, Photon typically reduces your total DBU consumption enough to more than offset the higher rate. It’s worth benchmarking on your specific workloads, but for teams doing a lot of analytical SQL, Photon is usually a free lunch.
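The break-even logic is simple enough to write down. In this sketch, the cost multiplier of 2.0 is a hypothetical stand-in for however much more Photon costs per hour of runtime on your compute type; the speedups come from your own benchmarks.

```python
def photon_cost_ratio(cost_multiplier: float, speedup: float) -> float:
    """Photon DBU cost divided by standard-runtime DBU cost for the same work.

    A value below 1.0 means Photon is cheaper overall.
    """
    return cost_multiplier / speedup


# Hypothetical multiplier; measure the speedup on your own workloads.
COST_MULTIPLIER = 2.0

for speedup in (1.5, 2.0, 3.0, 5.0):
    ratio = photon_cost_ratio(COST_MULTIPLIER, speedup)
    verdict = "cheaper" if ratio < 1 else "not cheaper"
    print(f"{speedup}x faster -> cost ratio {ratio:.2f} ({verdict})")
```

Photon saves DBU spend only when the measured speedup exceeds the cost multiplier, though a shorter runtime also trims the cloud infrastructure bill, which this ratio ignores.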

7. Tag Workloads and Teams to Track Cost by Owner

You can’t optimize what you can’t see. Without proper tagging, your Databricks cost report is one big number with no actionability. With tags — applied to clusters, jobs, and SQL warehouses by team, project, or environment — you can see exactly where your DBUs are going.

Databricks lets you attach custom tags to any compute resource. Once tagged, those tags flow through to your cloud bill (AWS Cost Explorer, Azure Cost Management, GCP Billing), letting you build team-level and project-level cost visibility. This is the foundation of any serious FinOps practice for Databricks.
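Once tags are in place, rolling usage up by owner is straightforward. The sketch below groups DBU consumption by a tag value; `custom_tags` is the field Databricks uses for tags on compute resources, while the record layout, tag key, team names, and numbers are hypothetical sample data.

```python
from collections import defaultdict


def dbus_by_tag(usage_records: list, tag_key: str) -> dict:
    """Sum DBUs per tag value, bucketing untagged usage separately."""
    totals = defaultdict(float)
    for record in usage_records:
        owner = record.get("custom_tags", {}).get(tag_key, "untagged")
        totals[owner] += record["dbus"]
    return dict(totals)


# Hypothetical usage records, e.g. exported from your billing data.
usage = [
    {"dbus": 120.0, "custom_tags": {"team": "data-eng", "env": "prod"}},
    {"dbus": 80.0, "custom_tags": {"team": "analytics", "env": "dev"}},
    {"dbus": 40.0, "custom_tags": {"team": "data-eng", "env": "dev"}},
    {"dbus": 25.0},  # no tags at all
]

by_team = dbus_by_tag(usage, "team")
print(by_team)
```

The size of the "untagged" bucket is a useful metric in its own right: the larger it is, the less of your bill you can attribute to an owner.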

8. Replace Ad-Hoc Notebooks With Scheduled Jobs Compute Pipelines

This one is about addressing a cultural habit before it becomes a structural cost problem. Data engineers often build pipelines interactively in notebooks on All-Purpose clusters, get them working, and then… just keep running them there. The notebook stays scheduled on the All-Purpose cluster because moving it to Jobs Compute requires a bit of extra work that never quite makes it to the top of the backlog.

Make migrating production-grade notebook pipelines to Jobs Compute part of your team’s definition of “done” for any new pipeline. The one-time migration cost is small. The ongoing savings over months and years are significant.

Databricks vs. Snowflake vs. BigQuery: Quick Cost Comparison

If you’re in the evaluation phase and comparing Databricks to alternatives, here’s a high-level cost context. These are fundamentally different architectures with different billing models, so direct apples-to-apples comparisons are tricky — but it’s useful to understand where the costs come from in each case.

| | Databricks | Snowflake | BigQuery |
| --- | --- | --- | --- |
| Pricing model | DBUs × rate (compute) + cloud infra | Credits × rate (compute) + storage | Per TB scanned (on-demand) or slots (reserved) |
| Primary strength | ML/AI + unified analytics (lakehouse) | Data warehousing & sharing | Massive-scale SQL analytics, GCP native |
| Separation of compute / storage | Compute separate from cloud storage | Full separation | Full separation |
| Best for | Teams doing ML + data engineering + SQL in one platform | Teams focused on structured data warehousing and cross-org data sharing | GCP-native teams doing large-scale analytical SQL |
| Cheapest path | Jobs Compute + Spot Instances + pre-purchase | Auto-Suspend + rightsizing credits | Partition pruning + clustering to minimize bytes scanned |

For teams that need ML and data engineering on the same platform — which increasingly describes most modern data orgs — Databricks’ unified architecture often makes the cost comparison more favorable than it looks on paper, because you’re replacing separate tools rather than adding a new one.

Frequently Asked Questions About Databricks Pricing

How much does Databricks cost per month?

It depends significantly on your usage, tier, and cloud provider. Small teams doing exploratory data work might spend a few hundred dollars per month. Mid-size production deployments typically run in the $2,000–$10,000/month range when you include both Databricks DBU charges and cloud infrastructure costs. Large enterprise deployments can easily reach $50,000–$200,000+/month. The single biggest driver of cost variability is how much All-Purpose Compute you’re using — and whether you’ve done the work to move production workloads to the cheaper Jobs Compute.

What is a DBU in Databricks?

A DBU (Databricks Unit) is Databricks’ unit of processing capability, consumed per hour of compute usage. It’s the fundamental billing unit: your cost equals the number of DBUs consumed multiplied by the dollar rate for that compute type. The DBU rate varies by compute type (All-Purpose, Jobs, SQL Warehouse, etc.), pricing tier (Standard, Premium, Enterprise), and cloud provider and region.

Is Databricks free to use?

Yes, with limitations. Databricks offers a free Community Edition, which gives you access to a single-node cluster for learning and experimentation. There’s also a full 14-day trial of the production platform. Past that, Databricks is a paid service billed on DBU consumption plus any underlying cloud infrastructure costs from your cloud provider.

Why is Databricks cheaper than traditional data warehouses?

Databricks claims its lakehouse architecture is up to 12x cheaper than traditional alternatives, largely because it separates compute from storage and lets you use cheap object storage (S3, ADLS, GCS) instead of proprietary storage systems. You also pay only for the compute you use rather than a fixed capacity. Whether that holds true for your specific workloads depends a lot on how efficiently you’re using the platform — idle clusters and wrong compute type choices can erode that cost advantage quickly.

What is the difference between Databricks Standard and Premium?

The main difference is governance and security features. Standard covers core functionality: notebooks, Spark compute, Delta Lake, and job scheduling. Premium adds Unity Catalog (unified data governance), role-based access controls, audit logging, and compliance features. Most teams running Databricks in production with multiple users and data access policies end up on Premium. If you’re a small team with simple access patterns, Standard may be sufficient.

Does Databricks charge separately for storage?

Databricks itself does not charge for storage — but your cloud provider does. Delta Lake data stored on Amazon S3, Azure Data Lake Storage (ADLS), or Google Cloud Storage (GCS) is billed by AWS, Azure, or GCP at their standard object storage rates. For most teams, storage costs are a small fraction of the total bill compared to compute, but they’re worth tracking separately — especially if you’re storing large volumes of historical data or running extensive data retention policies.

Start Optimizing Your Databricks Costs Today

Databricks pricing has a lot of moving parts — DBUs, compute types, pricing tiers, cloud infrastructure costs, serverless options — but once you understand how the pieces fit together, the path to optimization becomes clear.

The three most impactful things you can do right now: move production workloads from All-Purpose to Jobs Compute, set auto-termination on every interactive cluster, and tag your workloads so you know exactly where your spend is going.

And if you want full visibility into your Databricks costs across workloads, teams, and accounts — not just a static estimate from the official calculator — CloudForecast can help. We surface the utilization data, idle waste, and per-team cost breakdowns that turn Databricks cost management from a guessing game into a predictable, optimizable line item.
